Have you ever wanted to have a conversation with an AI model running on your own machine? Or are you interested in building AI-powered features into your projects? Then take a look at Llama 2 in Laravel. Llama 2 is a large language model developed by Meta AI, a division of Meta (formerly known as Facebook).

What is Llama 2

Llama 2 is a family of pre-trained and fine-tuned large language models (LLMs) released by Meta AI in 2023. Released free of charge for research and commercial use, Llama 2 models can handle a variety of natural language processing (NLP) tasks, from generating text content to writing programming code.

What is Ollama

Ollama allows you to run open-source large language models, such as Llama 2, locally.

Ollama bundles model weights, configuration, and data into a single package, defined by a Modelfile. It optimizes setup and configuration details, including GPU usage.

For a complete list of supported models and model variants, see the Ollama model library.

Setup

First, follow these instructions to set up and run a local Ollama instance:

1) Download and install Ollama on one of the supported platforms (including Windows Subsystem for Linux).

2) Fetch an LLM via ollama pull <name-of-model>

- View a list of available models via the model library

- e.g., for Llama 2 7B: ollama pull llama2

This will download the default tagged version of the model. Typically, the default points to the latest, smallest-sized-parameter model.

On Mac, the models will be downloaded to ~/.ollama/models

On Linux (or WSL), the models will be stored at /usr/share/ollama/.ollama/models

View the GitHub Repository for more commands. Run ollama help in the terminal to see available commands too.
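To check where pulled models ended up, a small script can look up the default directory for the current platform. This is only a sketch based on the two paths mentioned above; the helper name default_models_dir is made up for illustration, and a custom installation may store models elsewhere.

```python
import platform
from pathlib import Path
from typing import Optional

# Default model directories as listed in this post.
# Platforms not mentioned in the post are deliberately left out.
DEFAULT_MODEL_DIRS = {
    "Darwin": "~/.ollama/models",                  # Mac
    "Linux": "/usr/share/ollama/.ollama/models",   # Linux and WSL
}


def default_models_dir(system: Optional[str] = None) -> Optional[Path]:
    """Return the default model directory for an OS name, or None if not listed."""
    system = system or platform.system()
    raw = DEFAULT_MODEL_DIRS.get(system)
    return Path(raw).expanduser() if raw is not None else None


models_dir = default_models_dir()
if models_dir is None:
    print("No default model path listed for this platform")
else:
    print(models_dir, "(exists)" if models_dir.exists() else "(not found yet)")
```

On WSL, platform.system() reports Linux, so the Linux path is used there as well.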

Llama 2 vs. LLaMa 1

1) Greater context length: Llama 2 models provide a context length of 4,096 tokens, double the 2,048 tokens of LLaMA 1. A longer context lets the model work with longer prompts and conversations and produce more coherent long-form output.

2) Greater accessibility: Whereas LLaMA 1 was released for research use only, Llama 2 is available to any organization with fewer than 700 million monthly active users.

3) More robust training: Llama 2 was pre-trained on 40% more data, increasing its knowledge and understanding. Unlike LLaMA 1, the Llama 2 chat models were also fine-tuned using reinforcement learning from human feedback (RLHF).
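To make the context length concrete: the 4,096-token window is shared between the prompt and the generated reply, so a longer prompt leaves less room for the answer. The sketch below is plain arithmetic; the function name is made up for illustration, and counting the tokens in a real prompt would need the model's tokenizer, which is not shown here.

```python
LLAMA2_CONTEXT_TOKENS = 4096  # context length of Llama 2 as stated above


def max_new_tokens(prompt_tokens: int, context_tokens: int = LLAMA2_CONTEXT_TOKENS) -> int:
    """Return how many tokens remain for the reply once the prompt is counted."""
    if prompt_tokens < 0:
        raise ValueError("prompt_tokens must be non-negative")
    # A prompt longer than the window leaves no room at all.
    return max(context_tokens - prompt_tokens, 0)


print(max_new_tokens(3000))        # 1096 tokens left with Llama 2
print(max_new_tokens(3000, 2048))  # 0 tokens left with a 2,048-token window
```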

Llama 2 Installation

For Windows: https://ollama.com/download/windows

For Mac: https://ollama.com/download/mac

For Linux:

Install it using a single command:

curl -fsSL https://ollama.com/install.sh | sh

Ollama supports the models listed in the Ollama Library.

Please keep in mind that running the 7B models requires a minimum of 8 GB of RAM, while the 13B models need 16 GB, and the 33B models need 32 GB.
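As a quick way to apply this guidance, the sketch below picks the largest model size that fits a given amount of RAM. The numbers come straight from the paragraph above; the function name is hypothetical, and real memory use also depends on quantization and whatever else is running on the machine.

```python
# Minimum RAM in GB per model size (billions of parameters), as listed above.
MIN_RAM_GB = {7: 8, 13: 16, 33: 32}


def largest_model_for(ram_gb: float):
    """Return the largest listed model size (in billions of parameters) that fits, or None."""
    fitting = [size for size, needed in MIN_RAM_GB.items() if ram_gb >= needed]
    return max(fitting) if fitting else None


for ram in (4, 8, 16, 64):
    print(ram, "GB ->", largest_model_for(ram))
```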

After installing Ollama, you can fetch any model with a simple pull command.

ollama pull llama2

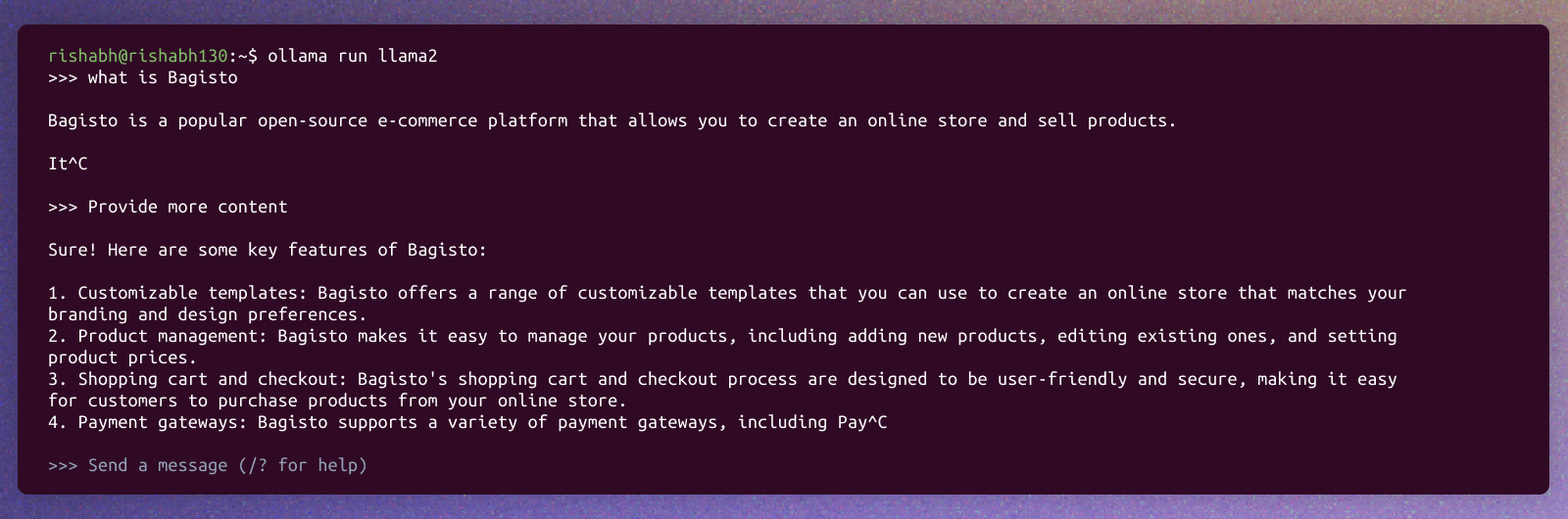

Once the pull completes, you can run the model directly in the terminal for text generation.

ollama run llama2

Ollama also offers a REST API for running and managing models.

See the API Documentation for the endpoints.
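As a rough illustration of the REST API, the sketch below sends a prompt to the generate endpoint using only the Python standard library. It assumes Ollama is running locally on its default port 11434 and that llama2 has already been pulled; the helper names build_generate_request and generate are made up for this example. The same JSON body could be posted from a Laravel application with any HTTP client.

```python
import json
import urllib.request

# Assumption: a local Ollama server listening on its default address.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt: str, model: str = "llama2") -> urllib.request.Request:
    """Build a POST request asking for a single, non-streamed completion."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama2", timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    # With "stream": False the reply is one JSON object whose
    # "response" field holds the generated text.
    return body["response"]


# Usage (needs a running Ollama server):
# print(generate("Why is the sky blue?"))
```

Setting stream to false keeps the example simple. Without it, the server returns the answer as a sequence of JSON objects that have to be read line by line.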

How can we use it in Laravel

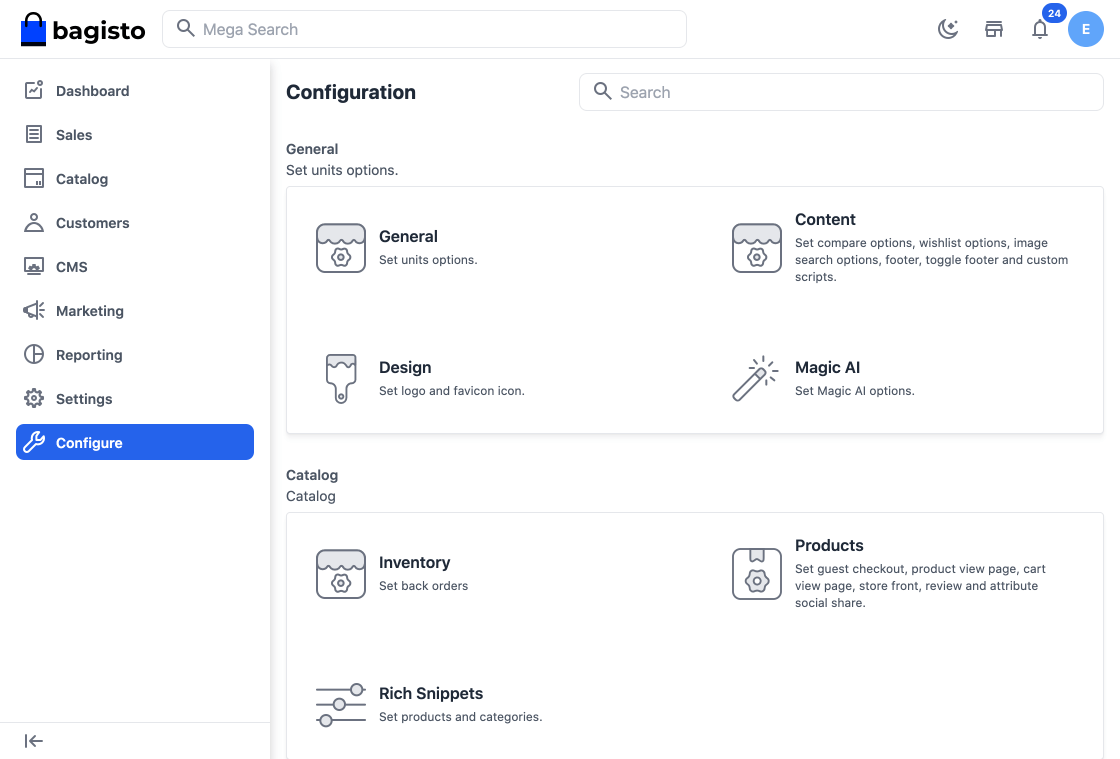

To showcase this, we will use Bagisto, an open-source Laravel eCommerce framework that offers robust features, scalability, and security for businesses of all sizes. Combined with the versatility of Vue JS, Bagisto offers a seamless shopping experience on any device.

Step 1 – Log in to the Admin Panel of Bagisto and go to Configure >> Magic AI as shown below.

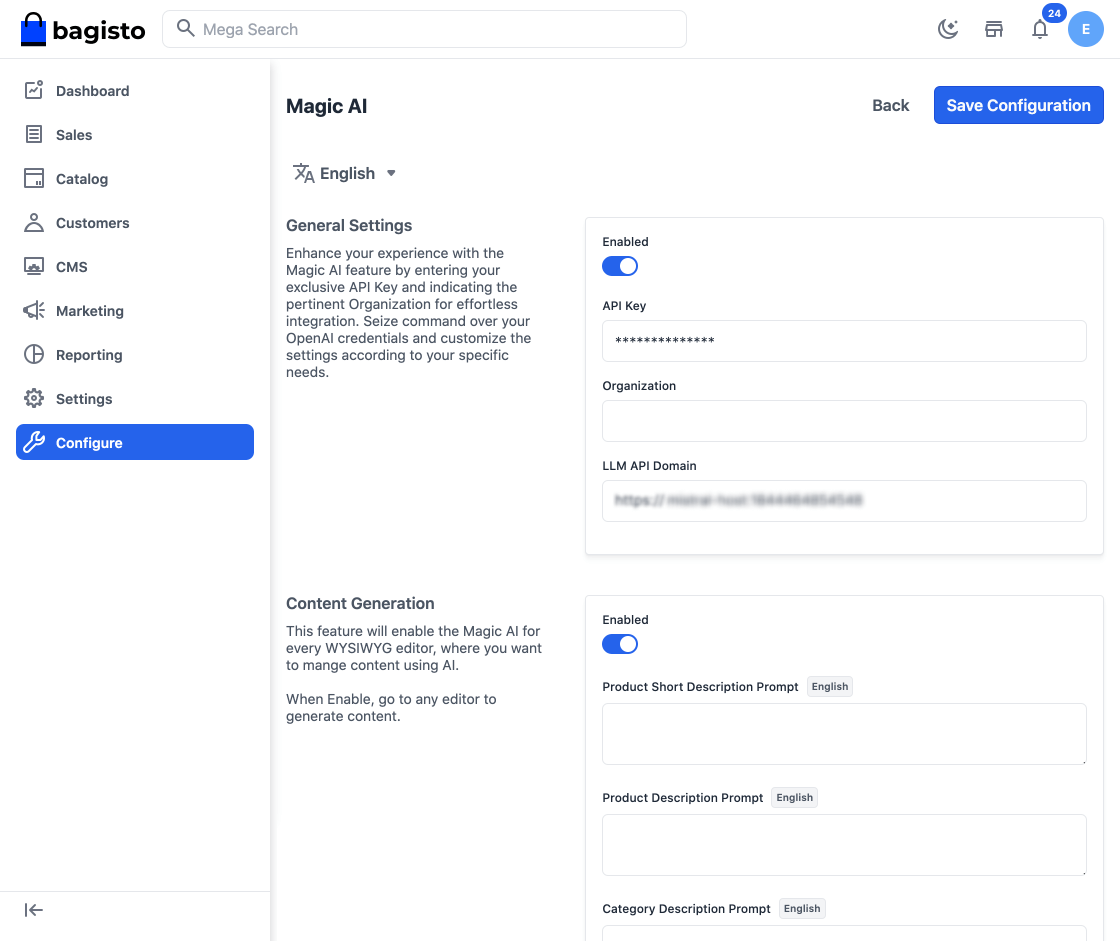

Step 2 – Enable the settings you want Magic AI to use, i.e. General Settings and Content Generation, as shown in the image below.

Content Generation

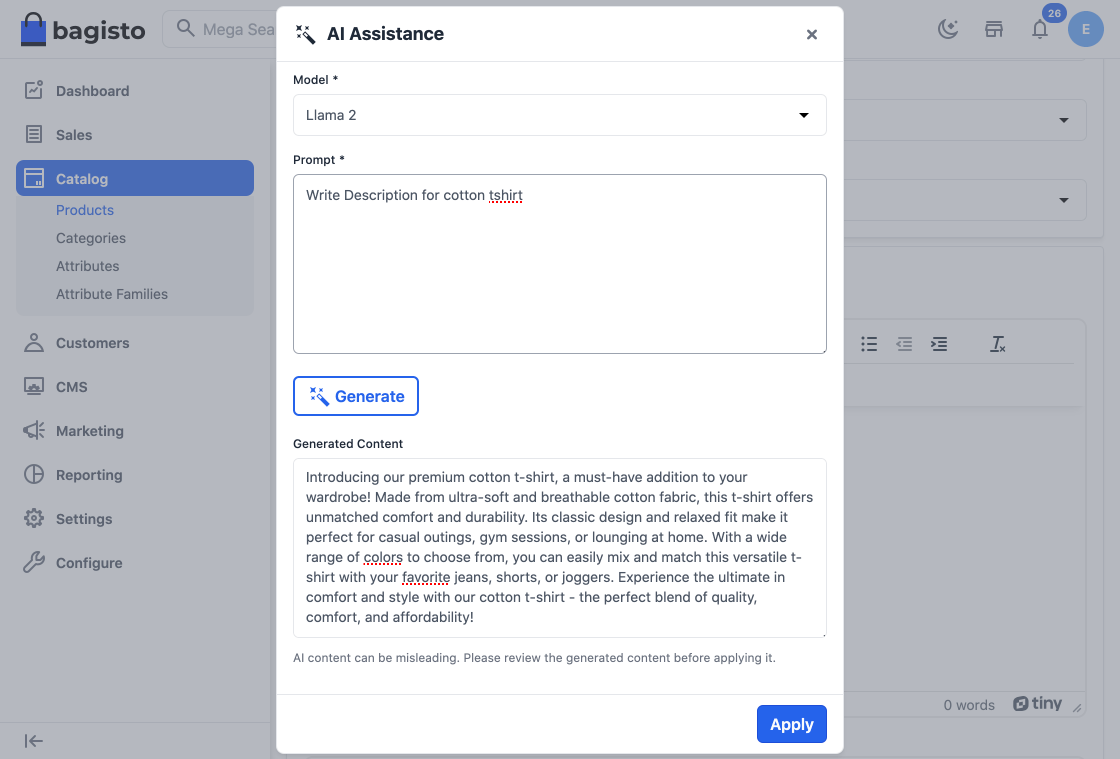

With Magic AI, store owners can effortlessly generate engaging product, category, and CMS content.

Note: Bagisto 2.1.0 provides native support for various LLMs.

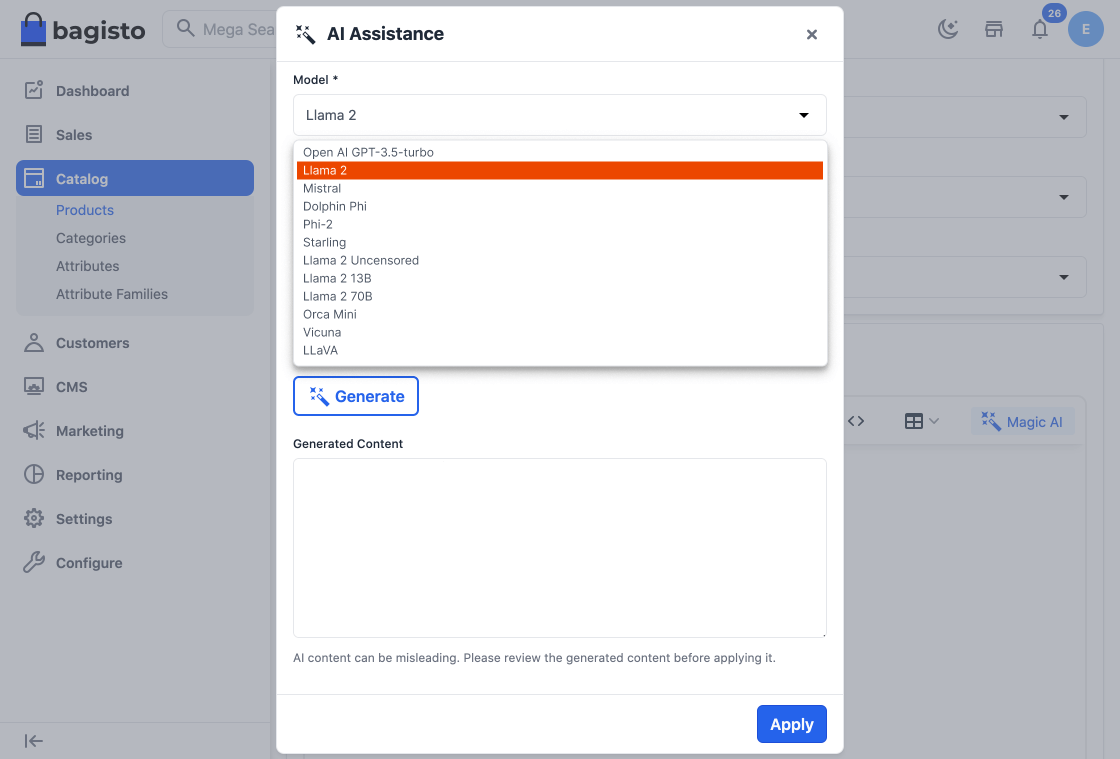

A) For Content – OpenAI gpt-3.5-turbo, Llama 2, Mistral, Dolphin Phi, Phi-2, Starling, Llama 2 Uncensored, Llama 2 13B, Llama 2 70B, Orca Mini, Vicuna, LLaVA.

As you can see in the image below, the content has been generated.

Say goodbye to time-consuming manual content creation: Magic AI crafts compelling and unique descriptions, saving you valuable time and effort.
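To give a feel for what a content-generation request to a local model might look like, here is a small sketch that turns product details into a request body for Ollama's generate endpoint. This is not Bagisto's Magic AI implementation. The prompt wording and the function name are invented for illustration, and the model tags are the ones commonly used in the Ollama library for the models listed above, so verify them in the library before relying on them.

```python
# Display names from the list above mapped to assumed Ollama model tags.
OLLAMA_TAGS = {
    "Llama 2": "llama2",
    "Mistral": "mistral",
    "Dolphin Phi": "dolphin-phi",
    "Phi-2": "phi",
    "Starling": "starling-lm",
    "Llama 2 Uncensored": "llama2-uncensored",
    "Llama 2 13B": "llama2:13b",
    "Llama 2 70B": "llama2:70b",
    "Orca Mini": "orca-mini",
    "Vicuna": "vicuna",
    "LLaVA": "llava",
}


def product_description_payload(name, features, model_name="Llama 2"):
    """Build a non-streaming generate payload asking for a product description."""
    prompt = (
        f"Write a short, engaging product description for '{name}'. "
        f"Key features: {', '.join(features)}."
    )
    return {"model": OLLAMA_TAGS[model_name], "prompt": prompt, "stream": False}


payload = product_description_payload("Trail Backpack", ["waterproof", "30 L capacity"])
print(payload["model"])
print(payload["prompt"])
```

The resulting dictionary can be serialized to JSON and posted to a running Ollama instance; the generated text can then be reviewed and edited before it is saved to the store.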

Thanks for reading this blog. Please comment below if you have any questions. Also, you can Hire Laravel Developers for your custom Laravel projects.

We hope this is helpful. If you run into any issues, feel free to raise a ticket at our Support Portal.
