Have you ever considered having a conversation with an intelligent computer? Or perhaps you’re interested in creating innovative projects with AI?

Well, take a look at Dolphin-Phi in Laravel! It's a small, uncensored language model that you can run on your own machine.

What is Dolphin-Phi

Dolphin Phi 2.6 is an uncensored model based on the 2.7B Phi model by Microsoft Research. It is trained on datasets similar to those used for other Dolphin models, such as Dolphin Mixtral.

It was created by Eric Hartford and Cognitive Computations.

What is Ollama

Ollama allows you to run open-source large language models, such as Dolphin-Phi, locally.

Ollama bundles model weights, configuration, and data into a single package, defined by a Modelfile. It optimizes setup and configuration details, including GPU usage.

For a complete list of supported models and model variants, see the Ollama model library.

Setup

First, follow these instructions to set up and run a local Ollama instance:

1) Download and install Ollama on one of the supported platforms (including Windows Subsystem for Linux)

2) Fetch an available LLM via ollama pull <name-of-model>

- View a list of available models via the model library

3) This will download the default tagged version of the model. Typically, the default points to the latest, smallest-sized-parameter model.

On Mac, the models will be downloaded to ~/.ollama/models.

On Linux (or WSL), the models will be stored at /usr/share/ollama/.ollama/models.

View the GitHub repository for more commands. Run ollama help in the terminal to see available commands too.
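The storage locations mentioned above can also be looked up from a script. The following is a minimal sketch rather than an official tool: ollama_models_dir and pull_and_list are hypothetical helper names, and the sketch assumes that the OLLAMA_MODELS environment variable, when set, overrides the default location.

```shell
# Print the default model directory for the current OS.
# Assumption: OLLAMA_MODELS, if set, takes precedence over the defaults.
ollama_models_dir() {
  if [ -n "${OLLAMA_MODELS:-}" ]; then
    printf '%s\n' "$OLLAMA_MODELS"
    return
  fi
  case "$(uname -s)" in
    Darwin) printf '%s\n' "$HOME/.ollama/models" ;;            # macOS
    *)      printf '%s\n' "/usr/share/ollama/.ollama/models" ;; # Linux or WSL
  esac
}

# Hypothetical helper: pull a model by name, then list the installed models.
pull_and_list() {
  ollama pull "$1" && ollama list
}
```

For example, running pull_and_list dolphin-phi and then ls "$(ollama_models_dir)" should show where the downloaded files ended up, assuming Ollama is installed and on the PATH.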

Overview of Dolphin-Phi AI Models

Dolphin-Phi is an uncensored, fine-tuned model based on Microsoft's Phi-2.

Phi-2 is a 2.7 billion-parameter language model that demonstrates outstanding reasoning and language understanding capabilities, showcasing state-of-the-art performance among base language models with less than 13 billion parameters.

Key Features

1) Balance of cost and performance: One prominent highlight of Dolphin-Phi is its balance between cost and performance. At 2.7 billion parameters, the underlying Phi-2 model is small enough to run locally, which helps keep costs under control.

2) Fast inference speed: Dolphin-Phi is optimized for low latency. It also has a low memory requirement and high throughput for its size. This matters most when you want to scale your production use cases.

3) Transparency and trust: Dolphin-Phi is open and customizable, which can help organizations meet stringent regulatory requirements.

4) Accessible to a wide range of users: Dolphin-Phi is freely available, which helps organizations of any size integrate generative AI into their applications.

Dolphin-Phi Installation

For Windows: https://ollama.com/download/windows

For Mac: https://ollama.com/download/mac

For Linux: Install it using a single command

curl -fsSL https://ollama.com/install.sh | sh

Ollama supports the models listed in the Ollama library.

Please keep in mind that running the 7B models requires a minimum of 8 GB of RAM, while the 13B models need 16 GB, and the 33B models need 32 GB.

After installing Ollama, you can fetch any model with a simple pull command, for example ollama pull dolphin-phi.

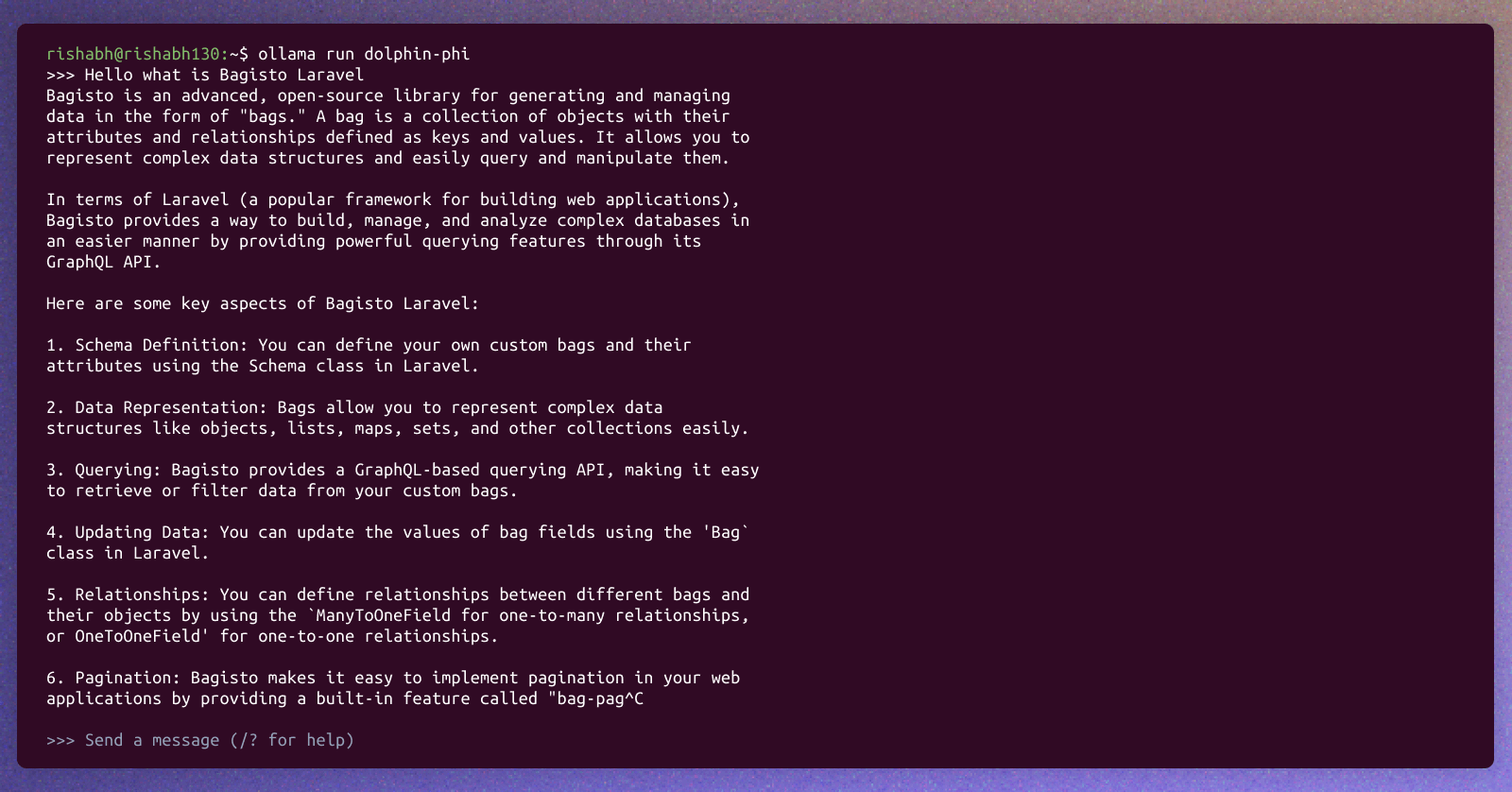

After the pull completes, you can run the model directly in the terminal for text generation.

ollama run dolphin-phi

Ollama also offers a REST API for running and managing models.

See the API Documentation for the endpoints.
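As one illustration of the REST API, the sketch below sends a single non-streaming prompt to the /api/generate endpoint with curl. It assumes Ollama is listening on its default local address, http://localhost:11434, and that dolphin-phi has already been pulled. build_payload and generate are hypothetical helper names, and the payload builder is deliberately simple: it does not escape quotes or newlines in the prompt.

```shell
# Assumption: Ollama is reachable at its default local address.
OLLAMA_URL="${OLLAMA_URL:-http://localhost:11434}"

# Build the JSON body for a non-streaming generation request.
# $1 = model name, $2 = prompt (must not contain quotes or newlines).
build_payload() {
  printf '{"model": "%s", "prompt": "%s", "stream": false}' "$1" "$2"
}

# POST the request body to the /api/generate endpoint and print the reply.
generate() {
  curl -s "$OLLAMA_URL/api/generate" -d "$(build_payload "$1" "$2")"
}
```

Calling generate dolphin-phi "Write a short product description for a leather wallet" should return a JSON object whose response field holds the generated text. A Laravel application could send the same request body through its own HTTP client.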

How can we use it in Laravel

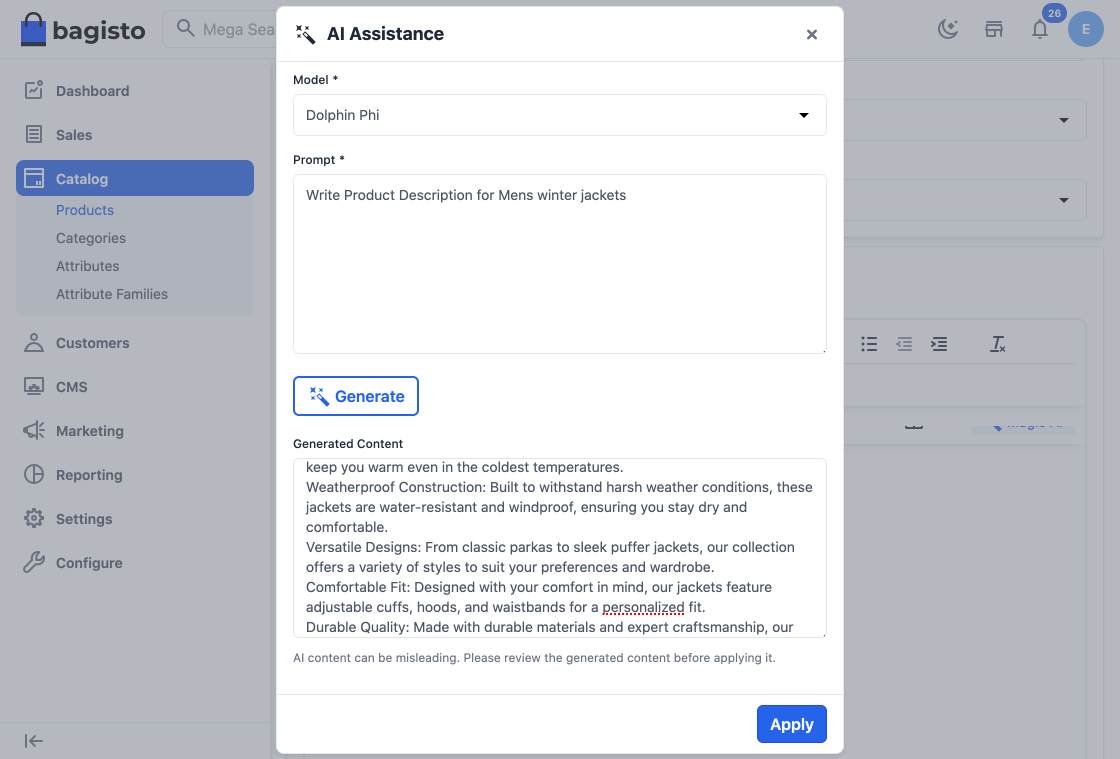

We will showcase this with Bagisto, an open-source Laravel eCommerce framework that offers robust features, scalability, and security for businesses of all sizes. Combined with the versatility of Vue JS, Bagisto offers a seamless shopping experience on any device.

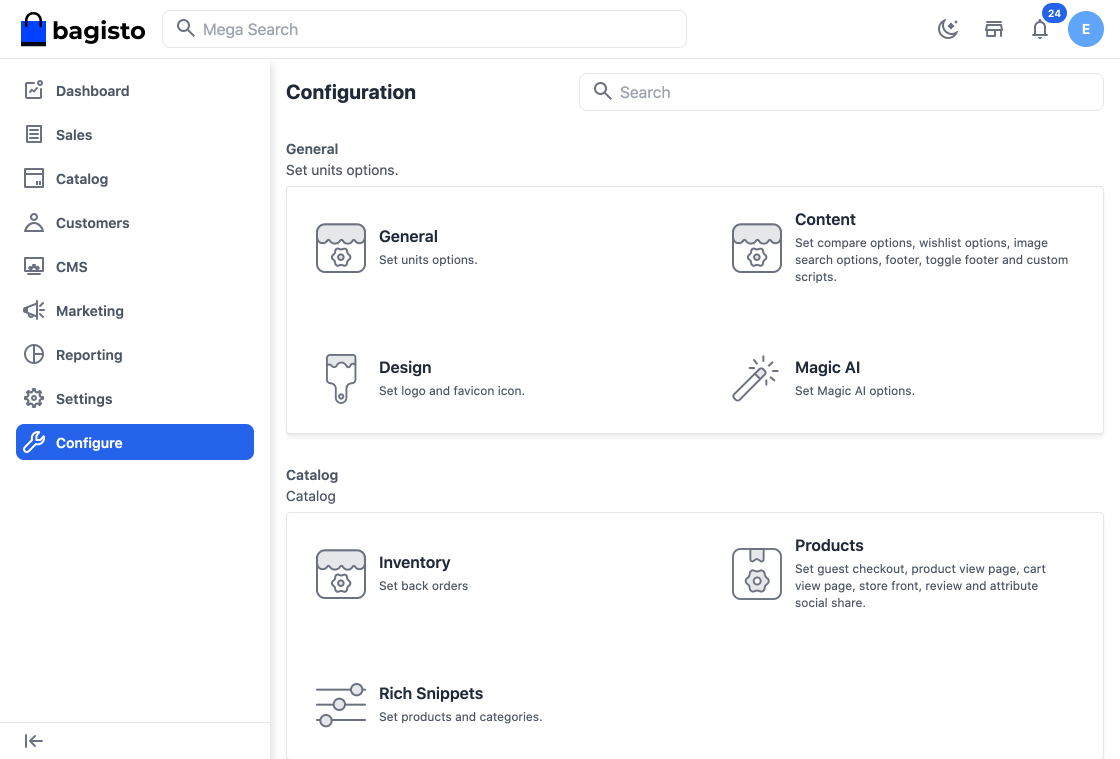

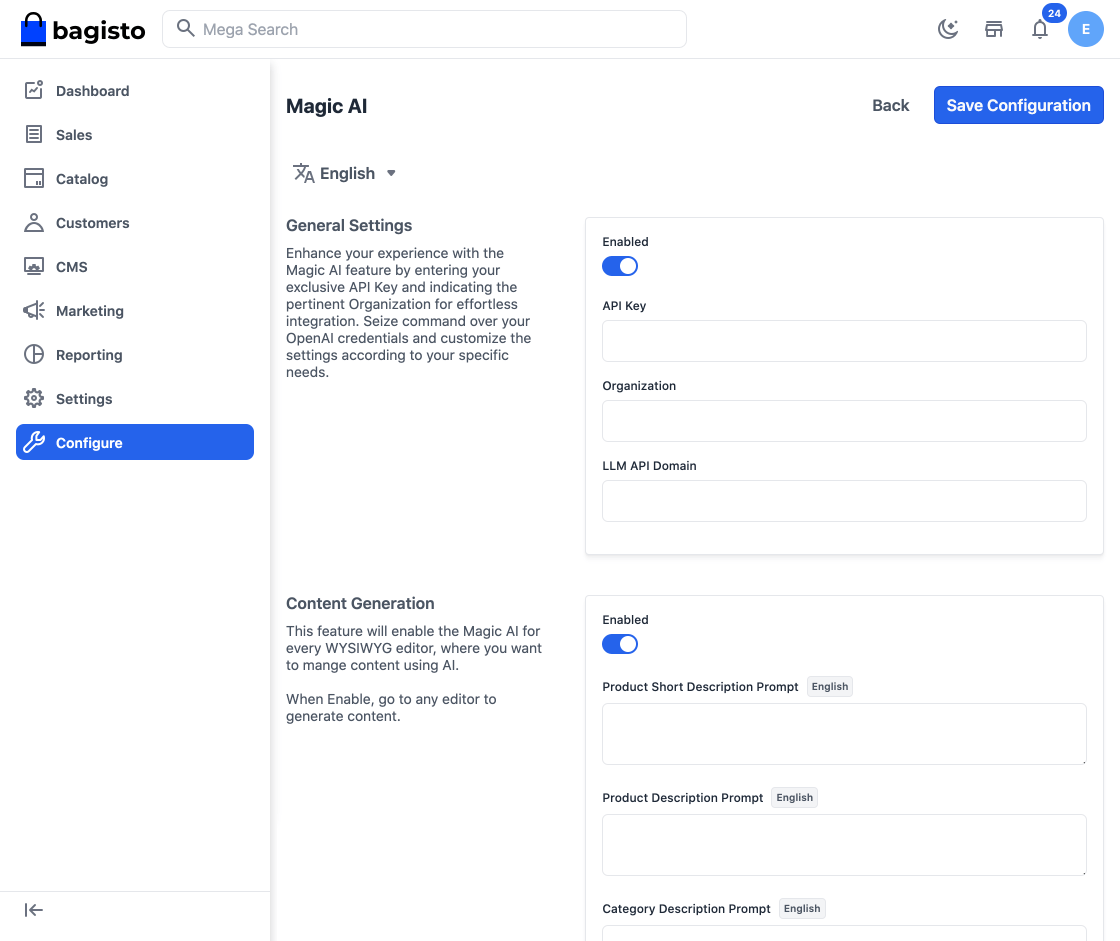

Step 1 – Log in to the Admin Panel of Bagisto and go to Configure >> Magic AI as shown below.

Content Generation

With Magic AI, store owners can effortlessly generate engaging product, category, and CMS content.

Note: Bagisto 2.1.0 provides native support for various LLMs.

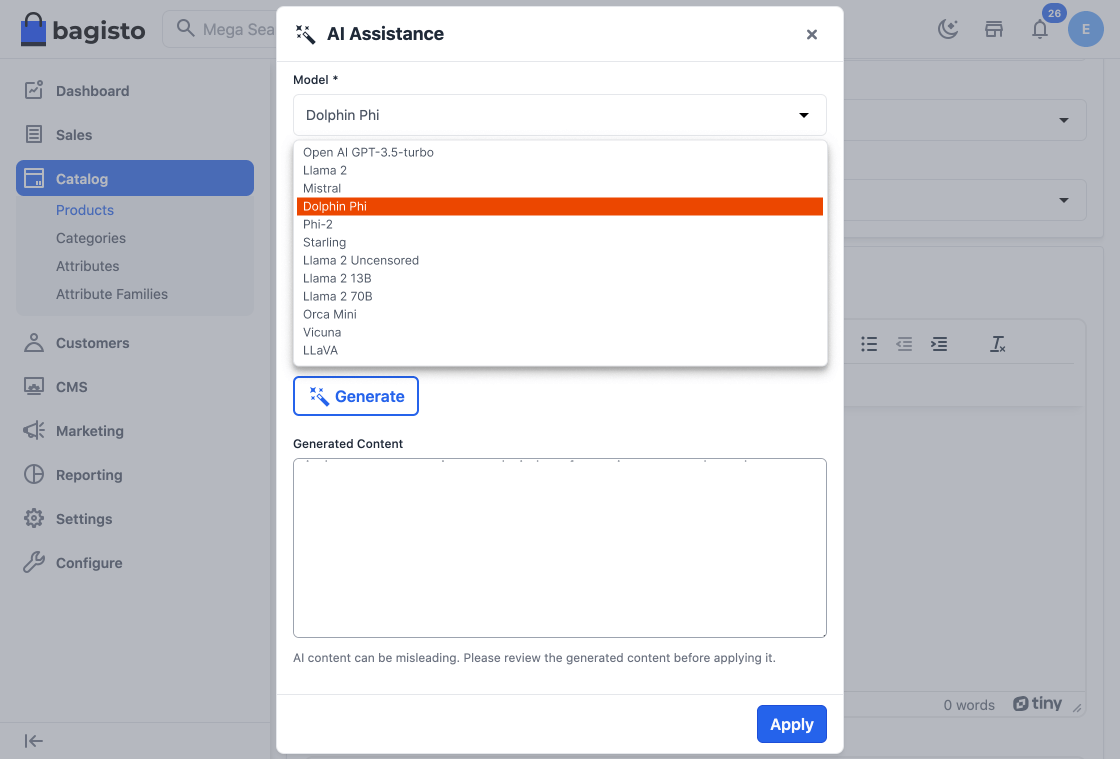

A) For Content – OpenAI gpt-3.5-turbo, Llama 2, Mistral, Dolphin Phi, Phi-2, Starling, Llama 2 Uncensored, Llama 2 13B, Llama 2 70B, Orca Mini, Vicuna, LLaVA.

As you can see in the image below, the content has been generated.

Say goodbye to time-consuming manual content creation: Magic AI crafts compelling and unique descriptions, saving you valuable time and effort.

Thanks for reading this blog. Please comment below if you have any questions. Also, you can Hire Laravel Developers for your custom Laravel projects.

We hope this is helpful. If you run into any issues, feel free to raise a ticket at our Support Portal.
